"Latency problems are harder because the speed of light is fixed; you can't bribe God." (David Clark)

In recent years, while network bandwidth has increased significantly, the kernel's packet processing capability has not seen a corresponding improvement. To further boost bandwidth and reduce latency, technologies such as RDMA and DPDK have emerged, bypassing the kernel to handle packets directly. This article introduces current RDMA technologies, provides comparisons, and discusses common issues.

High-Level Overview

RDMA essentially offloads the transport layer to the network card, bypassing the kernel for packet processing and removing the CPU from the critical path. As the name implies, it allows one machine to write directly into the virtual memory of another, achieving latencies on the order of microseconds in controlled environments. However, traditional RDMA has two major drawbacks:

- It requires a lossless network that supports RDMA, such as InfiniBand.

- Consequently, additional InfiniBand switches and compatible network cards must be deployed in the environment.
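
To make the "write directly into another machine's memory" idea more concrete, here is a toy Python model of a one-sided write. It is only a sketch under loud assumptions: `ToyNic`, `MemoryRegion`, `register`, and `remote_write` are hypothetical names invented for illustration, not part of any RDMA library, and no real network or NIC is involved. The `rkey` field loosely mirrors the remote key that real RDMA stacks hand out when a buffer is registered.

```python
import secrets
from dataclasses import dataclass


@dataclass
class MemoryRegion:
    """A buffer the owning host has registered for remote access (toy model)."""
    buf: bytearray
    rkey: int  # token a remote peer must present; hypothetical stand-in for a real rkey


class ToyNic:
    """Stands in for the NIC, which services remote writes on behalf of the host."""

    def __init__(self):
        self._regions = {}  # rkey -> MemoryRegion

    def register(self, size):
        # Registration is the one-time setup step performed by the host.
        region = MemoryRegion(bytearray(size), secrets.randbits(32))
        self._regions[region.rkey] = region
        return region

    def remote_write(self, rkey, offset, data):
        # The "NIC" checks the key and the bounds, then copies the payload
        # straight into the registered buffer. No application code on the
        # receiving host runs on this path.
        region = self._regions.get(rkey)
        if region is None:
            raise PermissionError("unknown rkey")
        if offset < 0 or offset + len(data) > len(region.buf):
            raise IndexError("write outside registered region")
        region.buf[offset:offset + len(data)] = data


if __name__ == "__main__":
    nic = ToyNic()
    region = nic.register(16)
    nic.remote_write(region.rkey, 4, b"rdma")
    print(bytes(region.buf))
```

The point of the model is the division of labor: the host only registers memory up front, and every later write is validated and applied by the (simulated) NIC, which is what takes the CPU off the critical path.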

Why Not Use RDMA on Ethernet?

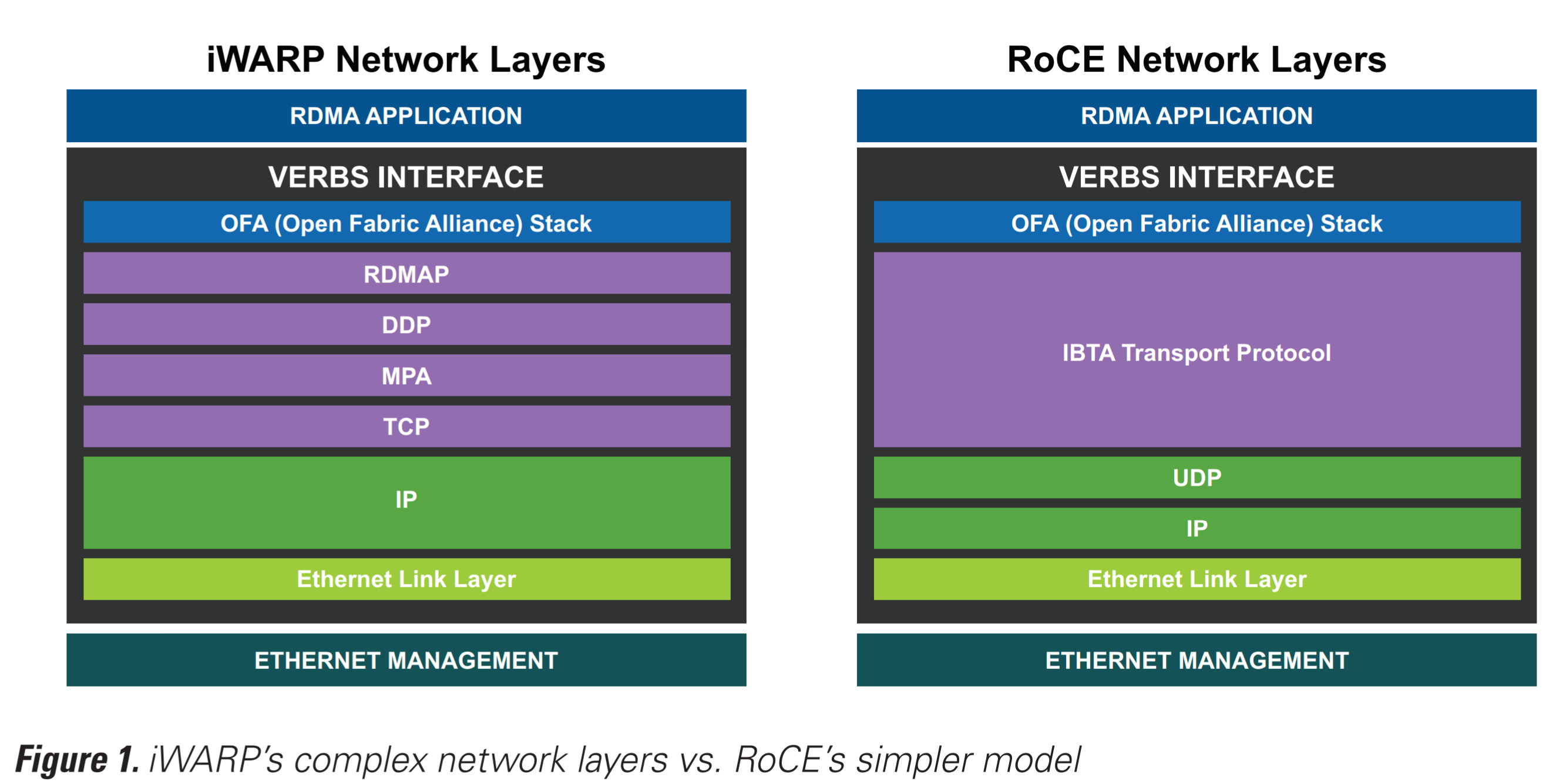

The good news is that RDMA can indeed run over Ethernet. Currently, RDMA technologies for Ethernet are divided into two major camps: RoCE (RDMA over Converged Ethernet) and iWARP (Internet Wide Area RDMA Protocol). RoCE was proposed by Mellanox, while iWARP is primarily supported by Intel and Chelsio. However, both of these major technologies currently have some significant drawbacks.

RoCE vs. iWARP

RoCE (in its routable form, RoCEv2) runs over UDP, making it somewhat faster than iWARP, which runs over TCP/IP. The trade-off is that RoCE still needs a lossless network for optimal performance; it works on a lossy network, but performance degrades significantly. PFC (Priority Flow Control) was therefore adopted to make Ethernet lossless. Standard PFC reads the packet priority from the VLAN tag, so to deploy it at scale in datacenters that do not use VLANs, Microsoft designed DSCP-based PFC, which carries the priority in the IP header's DSCP field and lets PFC work across Layer 3. Microsoft also published a paper at SIGCOMM'16 describing problems encountered in large-scale RoCE deployments, including livelock, deadlock, and pause frame storms. While a lossless network preserves RoCE performance, its complex configuration creates challenges for network operators.
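
As a rough illustration of how pause-based flow control keeps a queue lossless, here is a toy single-priority simulation in Python. Everything in it is an assumption made for illustration: the function name `simulate`, the tick-based timing, and the `xoff`/`xon` threshold values are hypothetical, and the pause takes effect instantly, which a real link does not guarantee.

```python
def simulate(ticks, arrival_per_tick, drain_per_tick, capacity, xoff=None, xon=None):
    """Return (delivered, dropped) for one receive queue.

    If xoff/xon are given, the receiver pauses the sender once the queue
    occupancy reaches xoff and resumes it once occupancy falls to xon.
    Without them, packets that do not fit in the queue are dropped.
    """
    queue = 0
    delivered = 0
    dropped = 0
    paused = False
    for _ in range(ticks):
        # The sender transmits only while it is not paused.
        if not paused:
            accepted = min(arrival_per_tick, capacity - queue)
            dropped += arrival_per_tick - accepted
            queue += accepted
        # The receiver drains what it can this tick.
        drained = min(drain_per_tick, queue)
        queue -= drained
        delivered += drained
        # The receiver decides whether the sender may transmit next tick.
        if xoff is not None:
            if queue >= xoff:
                paused = True
            elif queue <= xon:
                paused = False
    return delivered, dropped


if __name__ == "__main__":
    # Sender is twice as fast as the receiver; the queue holds 16 packets.
    print("no pause :", simulate(100, 4, 2, 16))
    print("with pause:", simulate(100, 4, 2, 16, xoff=10, xon=4))
```

In this sketch the pause threshold sits well below the queue capacity, leaving headroom for the burst that arrives before the sender stops. Choosing thresholds and headroom per priority, per port, and per switch is one concrete reason the configuration becomes burdensome at scale.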

RDMA vs. DPDK

Like RDMA, DPDK bypasses the kernel; it retrieves packets by polling, which avoids interrupt overhead. Mellanox argues that RDMA is still superior because DPDK performs all packet processing in user space on the CPU, whereas RDMA offloads part of it to the NIC. However, others argue that this reasoning is flawed, because RDMA applications must also choose between busy polling and event-triggered modes; the former consumes more CPU resources, while the latter results in higher latency.

Conclusion

Numerous reports indicate that RDMA offers significant performance gains over TCP. However, the primary challenges lie in programmability, security, deployment, and operations. The management complexity of RDMA continues to make large-scale deployment difficult. Ultimately, it comes down to the old adage: there is no single best solution, only the most suitable one. How much we must sacrifice to achieve these performance gains, and whether it is worth it, is a question that will have a different answer for every deployment.

Reference

- https://shelbyt.github.io/rdma-explained-1.html

- http://www.mellanox.com/related-docs/whitepapers/WP_heavyreading-NFV-performance.pdf

- http://www.mellanox.com/related-docs/whitepapers/WP_RoCE_vs_iWARP.pdf

- https://www.microsoft.com/en-us/research/wp-content/uploads/2016/11/rdma_sigcomm2016.pdf

Copyright Notice: All articles on this blog are licensed under CC BY-NC-SA 4.0 unless otherwise stated.