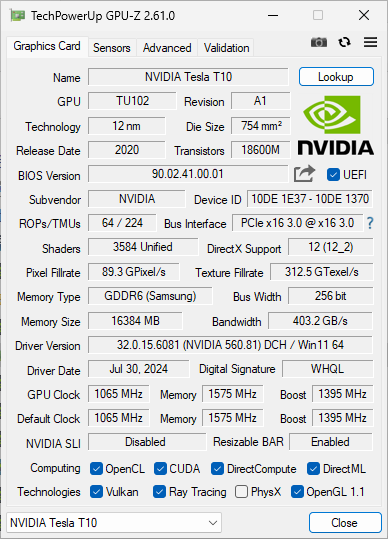

Recently, while browsing Xianyu, China's second-hand marketplace, I came across an unusual graphics card: the Tesla T10. NVIDIA designed this data-center GPU specifically for cloud gaming, and it was deployed mainly in GeForce NOW servers. Retired units have now reached the second-hand market and currently sell on Xianyu for about 1,350 RMB (approx. $190 USD). Given the low price, I bought two to evaluate their performance.

Deploying Prometheus Monitoring System on Arista Switches

Overview: This article explains how to run node_exporter and snmp_exporter in Docker containers on Arista switches so that Prometheus can monitor switch status.
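To make the setup more concrete, here is a minimal sketch that generates the Prometheus `scrape_configs` for such a deployment. It assumes node_exporter and snmp_exporter listen on their default ports (9100 and 9116) on each switch, that the switch's SNMP agent is reachable from the snmp_exporter container, and that the `if_mib` module from snmp_exporter's default `snmp.yml` is enough; the switch hostnames and job names are hypothetical. The relabeling follows the usual snmp_exporter pattern of passing the device as the `target` query parameter.

```python
import json

# Hypothetical switch hostnames; replace them with your own management addresses.
SWITCHES = ["arista-sw1.example.net", "arista-sw2.example.net"]

# Assumed default ports: node_exporter 9100, snmp_exporter 9116.
NODE_EXPORTER_PORT = 9100
SNMP_EXPORTER_PORT = 9116


def build_scrape_configs(switches):
    """Return Prometheus scrape_configs for exporters running on each switch."""
    node_job = {
        "job_name": "arista-node",
        # node_exporter is scraped directly on every switch.
        "static_configs": [
            {"targets": [f"{sw}:{NODE_EXPORTER_PORT}" for sw in switches]}
        ],
    }
    snmp_job = {
        "job_name": "arista-snmp",
        "metrics_path": "/snmp",
        "params": {"module": ["if_mib"]},  # module from the default snmp.yml
        "static_configs": [{"targets": list(switches)}],
        "relabel_configs": [
            # Pass the switch as the ?target= query parameter...
            {"source_labels": ["__address__"], "target_label": "__param_target"},
            # ...keep it as the instance label...
            {"source_labels": ["__param_target"], "target_label": "instance"},
            # ...and send the scrape to the snmp_exporter on that same switch.
            {
                "source_labels": ["__param_target"],
                "target_label": "__address__",
                "replacement": "${1}:" + str(SNMP_EXPORTER_PORT),
            },
        ],
    }
    return [node_job, snmp_job]


if __name__ == "__main__":
    # JSON is a subset of YAML, so this output can go straight into prometheus.yml.
    print(json.dumps({"scrape_configs": build_scrape_configs(SWITCHES)}, indent=2))
```

The `${1}:9116` replacement rewrites each scrape address to the snmp_exporter running on the switch being monitored, so no central exporter is needed.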

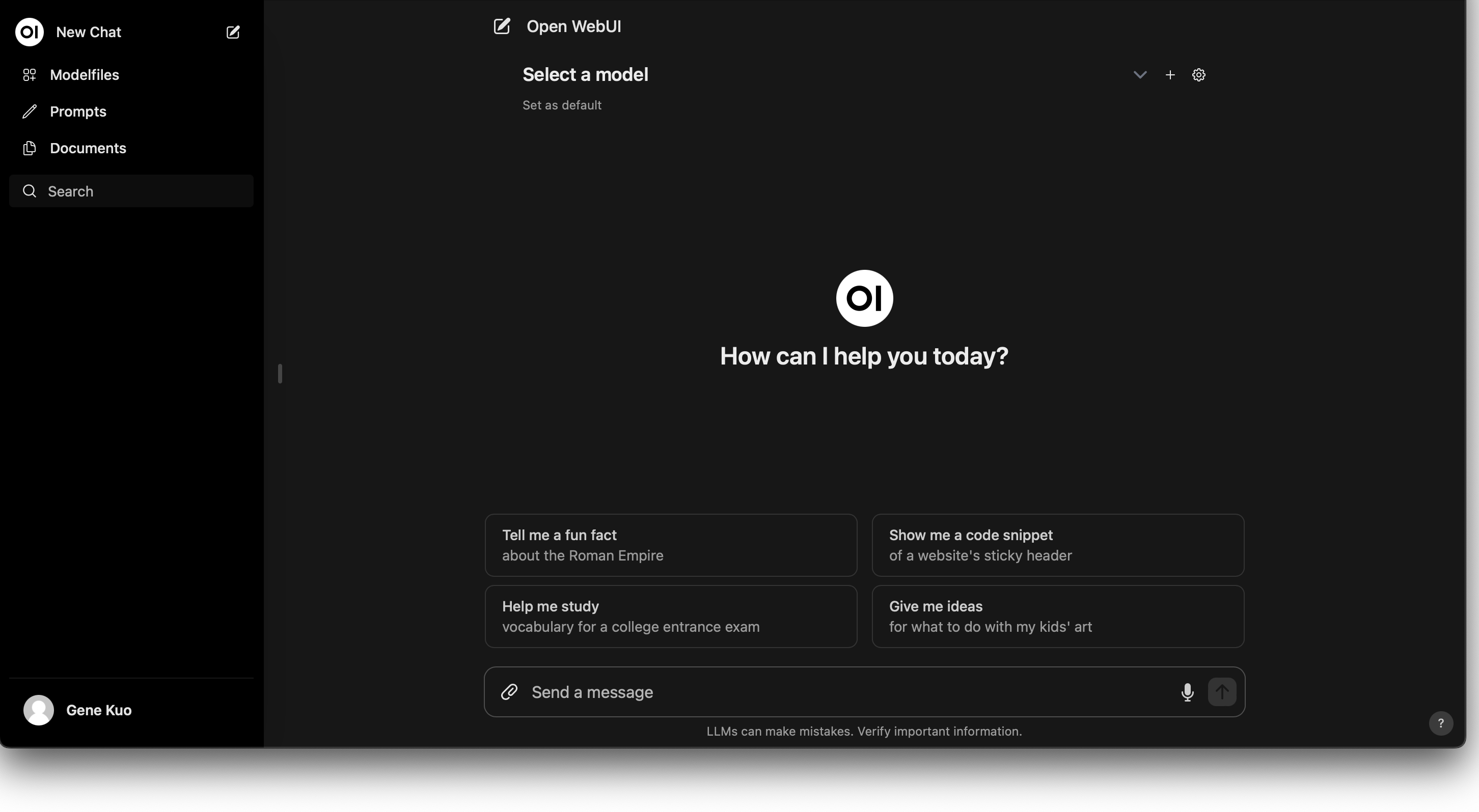

Deploying an LLM Chatbot on Kubernetes Using Nvidia GPUs

With the rapid advancement of AI technology, more and more enterprises and developers want to integrate Large Language Model (LLM) chatbots into their systems. This guide shows how to use Nvidia GPUs in a Kubernetes environment to deploy a high-performance LLM chatbot, covering every step from installing the necessary drivers and tools to the final deployment.
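As a rough illustration of the final step, the sketch below builds a Pod manifest that requests one GPU through the `nvidia.com/gpu` extended resource. It assumes the NVIDIA driver and the NVIDIA device plugin (or GPU Operator) are already installed on the nodes; the pod name, image, and port 8000 are hypothetical placeholders for whatever LLM serving stack you use.

```python
import json

# Hypothetical name and image; substitute your own LLM serving image.
POD_NAME = "llm-chatbot"
IMAGE = "registry.example.com/llm-chatbot:latest"


def gpu_pod_manifest(name, image, gpus=1):
    """Build a Pod that requests NVIDIA GPUs via the device plugin resource."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"app": name}},
        "spec": {
            "containers": [
                {
                    "name": name,
                    "image": image,
                    "ports": [{"containerPort": 8000}],  # assumed serving port
                    # GPUs are requested under limits; the NVIDIA device plugin
                    # advertises them to the scheduler as nvidia.com/gpu.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
            "restartPolicy": "Always",
        },
    }


if __name__ == "__main__":
    # kubectl accepts JSON manifests, e.g. save to pod.json and run kubectl apply -f pod.json
    print(json.dumps(gpu_pod_manifest(POD_NAME, IMAGE), indent=2))
```

Extended resources such as `nvidia.com/gpu` are specified under `limits`, and Kubernetes uses the same value as the request, so the scheduler only places the pod on a node with a free GPU.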

How to Monitor Container PSI Information

Introduction: In the previous article, we explored PSI (Pressure Stall Information) and how to monitor system-level PSI data. This article delves into how to monitor PSI metrics for a single container.
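One way to find a container's PSI files on a cgroup v2 host is to look up the cgroup of one of its processes. The sketch below assumes a unified cgroup v2 hierarchy mounted at `/sys/fs/cgroup` and a kernel with PSI enabled; the helper names are hypothetical. The container's host PID can be obtained with, for example, `docker inspect --format '{{.State.Pid}}' <container>`.

```python
from pathlib import Path

CGROUP_ROOT = Path("/sys/fs/cgroup")  # assumed cgroup v2 mount point


def cgroup_v2_path(proc_cgroup_text):
    """Extract the cgroup v2 path from the contents of /proc/<pid>/cgroup.

    The cgroup v2 entry has hierarchy ID 0 and no controller list, e.g.
    '0::/system.slice/docker-<id>.scope'.
    """
    for line in proc_cgroup_text.splitlines():
        hierarchy_id, _controllers, path = line.split(":", 2)
        if hierarchy_id == "0":
            return path
    raise ValueError("no cgroup v2 entry found")


def pressure_files(pid):
    """Return the cpu/memory/io pressure file paths for the cgroup of pid."""
    text = Path(f"/proc/{pid}/cgroup").read_text()
    cg_dir = CGROUP_ROOT / cgroup_v2_path(text).lstrip("/")
    return {res: cg_dir / f"{res}.pressure" for res in ("cpu", "memory", "io")}
```

Reading the cgroup from `/proc/<pid>/cgroup` avoids hard-coding the layout used by a particular container runtime or cgroup driver.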

Interpreting and Applying Linux PSI (Pressure Stall Information) Metrics

Preface: When CPU, memory, or I/O devices are contended, workloads suffer latency spikes, lost throughput, and the risk of OOM kills. Without an accurate measure of this contention, users must either use hardware resources conservatively or overcommit them and risk frequent disruptions. Since version 4.20, the Linux kernel has included PSI (Pressure Stall Information), which shows more precisely how resource shortages affect overall system performance. This article briefly introduces PSI and explains how to interpret its data.
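The PSI files under `/proc/pressure/` (and the per-cgroup `*.pressure` files in cgroup v2) share a simple text format. The following minimal parser is a sketch of how that data can be read; the function names are hypothetical. In each line, `some` means at least one task was stalled on the resource, `full` means all non-idle tasks were stalled at the same time, `avg10`/`avg60`/`avg300` are the stalled share of wall-clock time (in percent) over those windows in seconds, and `total` is the cumulative stall time in microseconds.

```python
from pathlib import Path


def parse_psi(text):
    """Parse PSI text into {'some': {...}, 'full': {...}}.

    Each line looks like:
      some avg10=0.12 avg60=0.05 avg300=0.01 total=123456
    """
    result = {}
    for line in text.strip().splitlines():
        kind, *fields = line.split()
        values = {}
        for field in fields:
            key, value = field.split("=")
            # total is an integer microsecond counter; averages are percentages.
            values[key] = int(value) if key == "total" else float(value)
        result[kind] = values
    return result


def read_psi(resource):
    """Read system-wide PSI for 'cpu', 'memory' or 'io'."""
    return parse_psi(Path(f"/proc/pressure/{resource}").read_text())


if __name__ == "__main__":
    sample = (
        "some avg10=1.50 avg60=0.80 avg300=0.20 total=4200000\n"
        "full avg10=0.30 avg60=0.10 avg300=0.02 total=900000\n"
    )
    psi = parse_psi(sample)
    print(psi["some"]["avg10"], psi["full"]["total"])  # 1.5 900000
```

Because `total` only grows, sampling it twice and dividing the difference by the elapsed time gives a stall ratio over any custom interval, not just the 10/60/300-second windows.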

Deploying Charmed Kubernetes with OpenStack Integrator

Preface: Charmed Kubernetes is a Kubernetes deployment solution from Canonical that uses Juju to deploy Kubernetes to a variety of environments. This article shows how to deploy Charmed Kubernetes on OpenStack and use the OpenStack Integrator so that Kubernetes can consume OpenStack-provided persistent volumes and load balancers.

Rapid Kubernetes Cluster Deployment: A Practical Guide Using kops on OpenStack

Kubernetes offers multiple deployment options, and among the many tools available, kops stands out for its ease of use and high level of integration. This article will provide an in-depth introduction to the kops tool and guide readers through the practical steps of quickly establishing a Kubernetes cluster in an OpenStack environment.

How to Choose the Right SSDs for Ceph

As SSD prices continue to fall, many tech enthusiasts and enterprises are considering using Ceph to build SSD-based storage pools in pursuit of higher performance. However, selecting the right SSDs is critical to ensuring Ceph achieves outstanding performance. In this article, we will explore how to choose suitable SSDs for Ceph.

AMD GPUs and Deep Learning: A Practical Tutorial Guide

In the past, it was often said that running deep learning software on AMD GPUs was very troublesome, and users with deep learning needs were advised to buy Nvidia graphics cards. However, with the recent popularity of LLMs (Large Language Models), many research institutions have released models based on LLaMA, which I found interesting and wanted to test. Since all of my graphics cards with large VRAM are AMD, I decided to try running these models on them.

Introduction to Kubernetes Cluster-API

Kubernetes has been evolving in the cloud-native world for many years, giving rise to numerous projects for managing cluster lifecycles, such as kops and Rancher. VMware initiated a project called Cluster-API that uses Kubernetes' own capabilities to manage other Kubernetes clusters. This article gives a brief introduction to the Cluster-API project.
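The core idea of Cluster-API is that a workload cluster is described declaratively as Kubernetes objects in a management cluster, and controllers reconcile those objects into real machines. The sketch below builds a minimal `Cluster` object as a Python dict. It assumes the `v1beta1` API version (check which version your management cluster serves) and uses the Docker infrastructure provider's `DockerCluster` purely as an example; the cluster name and pod CIDR are hypothetical, and the referenced `KubeadmControlPlane` and `DockerCluster` objects, which a real cluster also needs, are omitted. In practice, `clusterctl generate cluster` produces the full set of manifests.

```python
import json

# Hypothetical cluster name used for illustration only.
CLUSTER_NAME = "demo"


def capi_cluster(name, pod_cidr="192.168.0.0/16"):
    """Build a Cluster API 'Cluster' object that points at the objects
    describing its control plane and its infrastructure."""
    return {
        "apiVersion": "cluster.x-k8s.io/v1beta1",
        "kind": "Cluster",
        "metadata": {"name": name, "namespace": "default"},
        "spec": {
            "clusterNetwork": {"pods": {"cidrBlocks": [pod_cidr]}},
            # Control plane machines are handled by the kubeadm control plane provider.
            "controlPlaneRef": {
                "apiVersion": "controlplane.cluster.x-k8s.io/v1beta1",
                "kind": "KubeadmControlPlane",
                "name": f"{name}-control-plane",
            },
            # Provider-specific resources (here: Docker, as an example) are
            # described by the infrastructure provider's own object.
            "infrastructureRef": {
                "apiVersion": "infrastructure.cluster.x-k8s.io/v1beta1",
                "kind": "DockerCluster",
                "name": name,
            },
        },
    }


if __name__ == "__main__":
    # Applied to the management cluster with kubectl, like any other object.
    print(json.dumps(capi_cluster(CLUSTER_NAME), indent=2))
```

Swapping providers only changes the `infrastructureRef` (and the objects it points to), which is what lets Cluster-API manage clusters on different platforms through one API.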